Using Rust and Nom to create an open source programming language for chatbots

We just open sourced CSML, a new and innovative way to create chatbots based on a very simple programming language we crafted for the occasion. Here are some thoughts on why we chose to build it with Rust and Nom!

At Clevy, we obsess about creating the best possible tooling for every chatbot creator. We figured that developers prefer writing code to using obscure and limited drag-and-drop interfaces, but the existing frameworks were simply too limited and required too much work before getting anywhere. So we set out to design a new programming language for this very purpose, and launched the open beta of CSML (Conversational Standard Meta Language) this past November.

Today, we are opening the source code of the CSML interpreter so that everyone can see how it works under the hood, use it in their own projects or even contribute if they feel like it. But more importantly, we feel this is a good educational resource on a cool use case for a custom programming language!

If you would like to read more about the language, feel free to visit the CSML website and its very thorough documentation. You can try it out in our free online development studio and follow along with some “getting started” examples. We also have some more chatbots available on GitHub, so make sure you have a look there too.

In any case, feel free to star the repo on GitHub. It would mean the world to us!

How CSML works

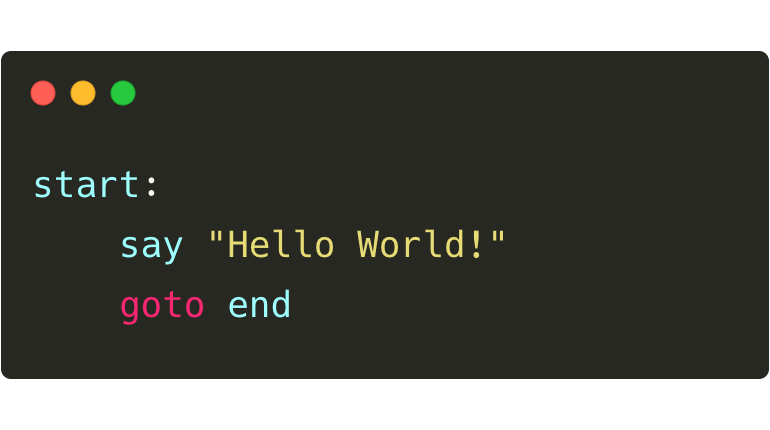

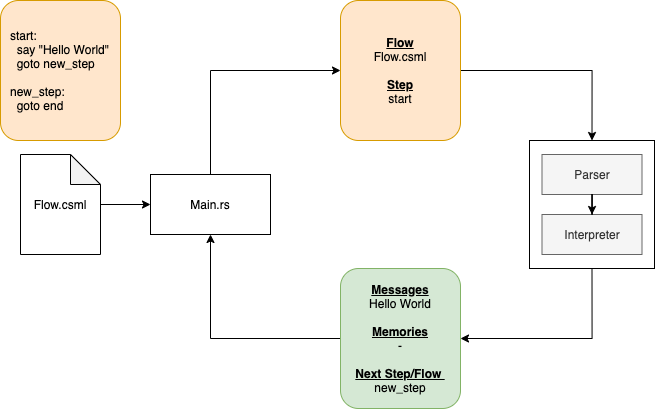

CSML is an interpreted language: this means that your code is not compiled but rather it will be parsed and, well, interpreted, at runtime.

It requires two things: some flows written in CSML and a user event (something the user wants to tell the bot). The flows are analyzed as requests come in so that your bot can decide how to respond to the user.
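To make the "flows plus event" idea concrete, here is a minimal sketch in Rust. It is not how the CSML interpreter is implemented: the `keyword => reply` flow format and the `parse_flow` and `respond` functions are made up for illustration. It only shows the general shape of an interpreter that parses its source each time a request arrives.

```rust
use std::collections::HashMap;

/// Parse a toy "flow" where each line maps a keyword to a reply,
/// e.g. `hello => Hi there!`.
/// This format is invented for the example; it is NOT CSML syntax.
fn parse_flow(source: &str) -> HashMap<String, String> {
    source
        .lines()
        // Lines without `=>` are silently skipped.
        .filter_map(|line| line.split_once("=>"))
        .map(|(keyword, reply)| (keyword.trim().to_lowercase(), reply.trim().to_string()))
        .collect()
}

/// Interpret one incoming user event against the flow source.
/// The source is parsed on every call, so nothing is compiled ahead of time.
fn respond(flow_source: &str, user_event: &str) -> String {
    let flow = parse_flow(flow_source);
    let key = user_event.trim().to_lowercase();
    flow.get(&key)
        .cloned()
        .unwrap_or_else(|| "Sorry, I did not understand that.".to_string())
}

fn main() {
    let flow = "hello => Hi there!\nbye => See you soon!";
    println!("{}", respond(flow, "Hello"));
    println!("{}", respond(flow, "what?"));
}
```

A real interpreter would of course keep conversation state between events and support a much richer grammar; the sketch only captures the two inputs described above.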

The language is designed to be learned very quickly, even by non-developers. You can find a few example bots on GitHub, such as this one, built precisely to help someone look for interesting GitHub repositories!

We also released a CLI client for the CSML studio if you want to quickly try it out from the comfort of your terminal.

Choosing Rust

When we started developing the language, our main promise beyond the quality of the language itself was to be able to run CSML with a safe and effective binary on any platform. Many languages fit that purpose, but we were drawn almost immediately to Rust. It is advertised as fast and safe, and it can easily be built to run on any machine with a very low footprint (very important for IoT or offline uses, but also compatible with large-scale, cloud-friendly deployments). It also seemed very fun to use: as you surely know, Rust was voted most loved language several years in a row in the Stack Overflow Developer Survey!

Whilst Rust is very strict in its syntax and has a steeper learning curve than some other similar languages we could have picked (Go for example), it also forces you to make good design choices and gives you confidence in how it will behave in production. We are still a small team working on this project, but we have experienced very few major kinks and bugs for a project of this size (now used in thousands of daily conversations by major customers on all sorts of clients).

One of the core features we were interested in with Rust was the ease of integration with other systems and languages through the Foreign Function Interface (FFI). We wanted to be able to offer the broadest possible support for our users and customers, as we expect use cases around IoT or even offline (we have received interest from airlines and airplane manufacturers, for instance), with a low energy footprint and very specific OS requirements. We also commonly build it ourselves into Node.js programs, and although it did take a bit more time and effort than we expected, we now have a solid build pipeline for this very use case.
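As a rough illustration of what exposing Rust code over FFI can look like, here is a small sketch using only the standard library. The `bot_reply` and `bot_reply_free` names are hypothetical and are not part of the CSML interpreter's actual interface; a real exported library would also add the `no_mangle` attribute to these functions (spelled `#[unsafe(no_mangle)]` in the 2024 edition) so the symbols keep their names.

```rust
use std::ffi::{CStr, CString};
use std::os::raw::c_char;

/// Hypothetical C-ABI entry point: takes a NUL-terminated user message and
/// returns a newly allocated, NUL-terminated reply (or null on failure).
///
/// # Safety
/// `message` must be null or point to a valid NUL-terminated string.
pub unsafe extern "C" fn bot_reply(message: *const c_char) -> *mut c_char {
    if message.is_null() {
        return std::ptr::null_mut();
    }
    // SAFETY: the caller guarantees `message` is a valid C string.
    let text = unsafe { CStr::from_ptr(message) }.to_string_lossy();
    let reply = format!("You said: {}", text);
    // Return null instead of panicking if the reply cannot be a C string.
    match CString::new(reply) {
        Ok(c_reply) => c_reply.into_raw(),
        Err(_) => std::ptr::null_mut(),
    }
}

/// Hands a reply back to Rust so it is freed by the allocator that created it.
///
/// # Safety
/// `reply` must be null or a pointer returned by `bot_reply`, freed only once.
pub unsafe extern "C" fn bot_reply_free(reply: *mut c_char) {
    if !reply.is_null() {
        // SAFETY: the pointer was produced by `CString::into_raw` above.
        unsafe { drop(CString::from_raw(reply)) };
    }
}

fn main() {
    // Call the functions the way a foreign caller would, through raw pointers.
    let message = CString::new("hello").expect("no interior NUL byte");
    let reply = unsafe { bot_reply(message.as_ptr()) };
    let text = unsafe { CStr::from_ptr(reply) }.to_string_lossy().into_owned();
    unsafe { bot_reply_free(reply) };
    println!("{}", text);
}
```

The pairing of an allocating function with a matching free function is a common convention for this kind of boundary, since the caller's language generally cannot release memory allocated by Rust.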

What we did not expect was the impact that using Rust would have on our recruitment pipeline: we are now receiving a spontaneous stream of resumes from quality Rust developers willing to join a cool, open-source, out-of-the-ordinary Rust-oriented project with a real-world impact. Given today’s technology landscape, with a huge imbalance between supply and demand for developer jobs, this is simply something we don’t usually experience with more standard stacks!

Creating the CSML parser with Nom

The main library we are using in the CSML interpreter is Nom, which is one of Rust’s go-to parsing libraries. It was initially built for parsing binary formats, but it works just as well with text files and programming languages (someone actually built a PHP parser using Nom!).

We evaluated other libraries such as LALRPOP and Pest, but we found Nom to be more flexible. We were defining the syntax at about the same time as we were implementing it, and Nom makes it easy to create small parsers for small things, which makes it a very good choice for experimenting! We also found that it scaled well as we added more rules or changed large pieces of the CSML specs.
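The "small parsers for small things" approach can be sketched without any dependency. The code below is a hand-rolled imitation of the combinator style in plain Rust, not Nom's actual API, and the `say "text"` statement it parses is a toy grammar chosen for the example rather than the CSML specification. Each function consumes a prefix of the input and returns what is left along with the parsed value, so larger parsers are just sequences of smaller ones.

```rust
/// On success, a parser returns the remaining input and the parsed value.
type ParseResult<'a, T> = Result<(&'a str, T), String>;

/// Small parser: skip any leading whitespace (possibly none).
fn whitespace(input: &str) -> ParseResult<'_, ()> {
    Ok((input.trim_start(), ()))
}

/// Small parser: match an exact keyword at the start of the input.
fn keyword<'a>(word: &'static str, input: &'a str) -> ParseResult<'a, &'a str> {
    match input.strip_prefix(word) {
        Some(rest) => Ok((rest, &input[..word.len()])),
        None => Err(format!("expected `{}`", word)),
    }
}

/// Small parser: a double-quoted string with no escape sequences.
fn quoted(input: &str) -> ParseResult<'_, &str> {
    let body = input.strip_prefix('"').ok_or("expected opening quote")?;
    let end = body.find('"').ok_or("expected closing quote")?;
    Ok((&body[end + 1..], &body[..end]))
}

/// Larger parser composed from the small ones: `say "some text"`.
fn say_statement(input: &str) -> ParseResult<'_, &str> {
    let (rest, _) = whitespace(input)?;
    let (rest, _) = keyword("say", rest)?;
    let (rest, _) = whitespace(rest)?;
    quoted(rest)
}

fn main() {
    match say_statement("  say \"Hello!\" rest") {
        Ok((rest, text)) => println!("text = {:?}, remaining = {:?}", text, rest),
        Err(error) => println!("parse error: {}", error),
    }
}
```

Because every parser has the same shape, adding or changing a rule usually means touching one small function, which is what makes this style convenient while a syntax is still in flux.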

The community aspect is also an important part of the equation when it comes to selecting a technology. We already knew Rust had an outstanding community (which I believe is part of the reason why people love Rust), and Nom also has the broadest community, which meant we could find more help when we were stuck somewhere.

Nom is very actively maintained. While we were working on the interpreter, Nom 5, a new major version of the library, was released, fixing most of the shortcomings we had experienced (mostly around error handling, type inference and readability of the cumbersome macro syntax). It only took us a couple of days to upgrade from Nom 4 to Nom 5, and it paid off almost immediately in productivity improvements!

What’s next?

Now that the CSML interpreter is openly available to everyone, we are going to continue to work heavily on the language. The last big feature we released was the CSML Standard Library, a bundle of built-in utility helpers to make it easier to develop CSML chatbots. The next major item we want to work on is improving our error handling, among other things by adding a linter that issues warnings and errors, making it easier to develop chatbots in CSML. In any case, you can find our open roadmap on Trello!

To learn more about the CSML Programming Language, read the docs, get a free developer account on CSML Studio, follow us on Twitter, and of course…

Discover the CSML interpreter on GitHub!